I. Introduction

OpenAI’s GPT-3 (Generative Pre-trained Transformer 3) has made headlines for its remarkable ability to generate human-like text. The model can compose essays, articles, emails, and even source code, and many consider it one of the most advanced AI models currently available. However, as with any new technology, expectations often run ahead of reality. This article explores the capabilities and limitations of GPT-3 and other OpenAI GPT models, and compares expectation with reality to determine whether GPT-3 lives up to the hype.

This is the biggest thing that’s happened in months.

— Aman Jha (@amanjha__) December 15, 2022

Seriously, 80% of the ideas I come up with use embeddings, not regular LLMs. Now it’s affordable. pic.twitter.com/y6FovKEIhu

II. What is GPT-3?

GPT-3 (Generative Pre-trained Transformer 3) is an AI language model created by OpenAI. Because it has been trained on enormous amounts of text data, it can produce coherent, fluent text that closely resembles human writing. It can generate text for a variety of tasks, including coding and writing essays and emails.

A brief overview of GPT-3:

GPT-3 is a neural network-based text generation model that employs deep learning. With 175 billion parameters, it was one of the largest language models available when it was released. It can generate text in various styles and forms and can perform tasks for which it was not explicitly trained.
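To give a sense of what 175 billion parameters means in practice, here is a rough back-of-the-envelope calculation in Python. The parameter count comes from the text above; the bytes-per-parameter values are just common numeric widths and are an assumption for illustration, not a published detail of how GPT-3 is stored or served.

```python
# Rough size of a model's weights at different numeric precisions.
# PARAMETERS is the figure quoted in the article; the precisions below
# are generic number formats chosen for illustration only.
PARAMETERS = 175_000_000_000

BYTES_PER_PARAMETER = {
    "float32": 4,
    "float16": 2,
    "int8": 1,
}


def weight_memory_gb(parameters: int, bytes_per_parameter: int) -> float:
    """Return the size of the weights in gigabytes (1 GB = 10**9 bytes)."""
    return parameters * bytes_per_parameter / 10**9


for name, width in BYTES_PER_PARAMETER.items():
    print(f"{name}: {weight_memory_gb(PARAMETERS, width):,.0f} GB")
```

Even at one byte per parameter, the weights alone would take 175 GB, which is far more memory than a typical personal computer has.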

The technology behind GPT-3:

GPT-3 is based on the transformer architecture, a kind of neural network first described in the 2017 paper “Attention Is All You Need.” The transformer’s attention mechanism handles sequential data such as text, which lets the model take the context of the input into account. GPT-3 is pre-trained on a vast amount of text using unsupervised learning, in which the model is trained on a large dataset without labels and learns to predict the next piece of text from what came before. The patterns and features it learns from the data are what allow it to produce cohesive, fluent language.
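The core operation of the transformer, scaled dot-product attention, can be sketched in a few lines of plain Python. This is a toy illustration under simplifying assumptions, not GPT-3’s actual implementation: it leaves out the learned projection matrices, multiple attention heads, and the causal masking a text generator needs, and the input vectors are made-up numbers.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
    return outputs


# Three toy 2-dimensional "token" vectors (illustrative values only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
result = attention(tokens, tokens, tokens)
for row in result:
    print([round(x, 3) for x in row])
```

Each output row blends information from all the tokens, weighted by how similar they are to the token being processed. This is how the model takes context into account.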

III. OpenAI GPT-3’s Capabilities

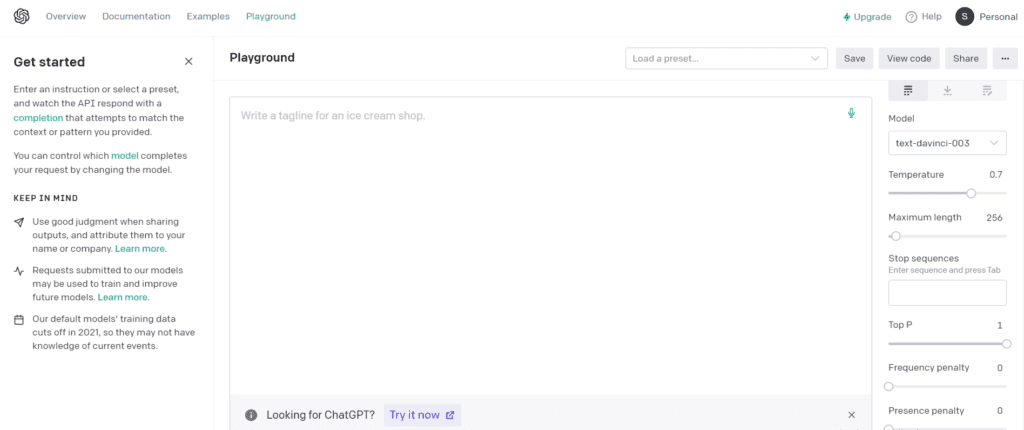

GPT-3 has a wide range of capabilities, thanks to the massive amount of text data it was trained on. The OpenAI GPT-3 Playground offers some extra options for trying them out. Some of its most notable abilities include:

Text generation:

GPT-3 can generate text in various styles, including essays, articles, emails, and code. It can also handle tasks it was not explicitly trained to do, such as question answering and text summarization.
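One common way to steer a model like GPT-3 toward a task it was not explicitly trained for is to put a few worked examples in the prompt. The sketch below only builds such a prompt as a string. The function name, the `Q:`/`A:` layout, and the sample questions are illustrative assumptions, and no model is called.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, worked examples, and a new query into one prompt."""
    lines = [instruction, ""]
    for question, answer in examples:
        lines.append(f"Q: {question}")
        lines.append(f"A: {answer}")
        lines.append("")
    # The final answer is left blank for the model to complete.
    lines.append(f"Q: {query}")
    lines.append("A:")
    return "\n".join(lines)


examples = [
    ("What is the capital of France?", "Paris"),
    ("What is the capital of Japan?", "Tokyo"),
]
prompt = build_few_shot_prompt(
    "Answer each question with a single word.",
    examples,
    "What is the capital of Italy?",
)
print(prompt)
```

The resulting text would be sent to the model as-is, and the model is expected to continue the pattern by filling in the last answer.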

Language understanding:

GPT-3 shows a high level of language understanding because it takes the context of the input into account. This allows it to generate text that is coherent and fluent.

Chatbot:

GPT-3 can be used to create chatbots that hold a conversation with users, interpreting the user’s intent and responding appropriately.
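A chatbot built on a text-completion model typically works by replaying the conversation so far as part of each new prompt. The following is a minimal sketch of that idea. The `stub_model` function is a hypothetical stand-in for a real model call, and the prompt wording and speaker labels are assumptions chosen for illustration.

```python
def render_prompt(turns, user_message):
    """Render earlier turns plus the new user message as one text prompt."""
    lines = [
        "The following is a conversation between a user and a helpful assistant.",
        "",
    ]
    for speaker, text in turns:
        lines.append(f"{speaker}: {text}")
    lines.append(f"User: {user_message}")
    lines.append("Assistant:")
    return "\n".join(lines)


def chat_turn(turns, user_message, complete):
    """Run one exchange and record both sides in the history."""
    prompt = render_prompt(turns, user_message)
    reply = complete(prompt).strip()
    turns.append(("User", user_message))
    turns.append(("Assistant", reply))
    return reply


def stub_model(prompt):
    """Hypothetical stand-in for a model call; reports the prompt length only."""
    return f" (stub reply to a prompt of {len(prompt.splitlines())} lines)"


history = []
print(chat_turn(history, "Hello!", stub_model))
print(chat_turn(history, "What did I just say?", stub_model))
```

Because the history grows with every turn, a real application would also need to trim or summarise older turns to stay within the model’s input limit.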

Language Translation:

GPT-3 can also perform language translation to a certain extent by understanding the context of the text and generating new text in the target language.

Examples of GPT-3’s abilities:

- GPT-3 can write essays and articles on various topics like technology, science, and history.

- GPT-3 can compose emails with a high degree of coherence and fluency.

- GPT-3 can generate code in various programming languages, such as Python and JavaScript.

- GPT-3 can also perform simple mathematical calculations and answer trivia questions.

Limitations of GPT-3:

- OpenAI GPT-3 has several functional limitations and is not without faults. It is trained on a vast amount of text data, but that data is not always accurate or objective, which is one of its main drawbacks. As a result, GPT-3 occasionally produces biased or factually incorrect text.

- GPT-3 also lacks common sense: it has no direct understanding of the physical world and cannot reliably reason about it.

- GPT-3 is not good at understanding sarcasm and irony and can often misinterpret these elements in text.

- GPT-3’s abilities are focused on text, and it is not designed for other types of tasks, such as image or voice recognition.

IV. GPT-3 in Comparison to Other AI

GPT-3 is one of the most advanced AI models that exist at this time, although it is not the only one. Google Duplex, a system that can carry out human-like phone conversations on a user’s behalf, is another of the most renowned AI models.

GPT-3 has broader capabilities and a more general-purpose approach than Google Duplex. However, Google Duplex is more advanced in terms of speech interpretation and synthesis, and it can make phone calls on behalf of the user.

GPT-3 is a major player in natural language processing (NLP) in the present landscape of artificial intelligence. Its capacity to generate cohesive and fluent writing stands out among competing approaches. Nevertheless, other AI models excel in other domains, such as image and voice recognition.

The role of companies such as OpenAI and NVIDIA in AI development:

OpenAI is a research company that creates and promotes friendly artificial intelligence. OpenAI created GPT-3 and continues to develop further AI projects. OpenAI’s mission is to ensure that AI is developed responsibly and safely.

NVIDIA is a technology company that specialises in graphics processing units (GPUs). GPUs from NVIDIA are widely used in AI research and development because they speed up the training of large AI models such as GPT-3. NVIDIA also offers an AI development platform called NVIDIA Deep Learning Platform, which provides AI developers with tools and resources.

OpenAI and NVIDIA contribute significantly to developing and promoting AI technology by providing researchers and developers with the necessary tools and resources to advance the field.

V. GPT-3’s Impact on the Industry

GPT-3 is utilised in numerous fields, including finance, healthcare, and customer service. In finance, it is used to evaluate massive volumes of data and generate reports. In healthcare, it is used to aid medical research and draft patient-specific treatment regimens. In customer service, it powers chatbots that can assist consumers in a human-like manner.

One potential effect of GPT-3 on the employment market is that it could automate tasks formerly performed by humans. For instance, GPT-3 might be used to generate reports and summaries previously produced by data analysts. Its natural language processing capabilities could also be used to automate certain customer service duties.

However, it is essential to clarify that GPT-3 is not intended to replace programmers or other specialists. Instead, it is intended to facilitate their work. GPT-3 is a tool that can generate code, compose documents, and perform other activities, but human review and oversight are still necessary to ensure that the output is accurate and suitable.

It is also essential to note that GPT-3 is not a complete solution for every activity; many tasks still require human participation, comprehension, and inventiveness.

In conclusion, GPT-3 can influence multiple industries by automating specific tasks, but it is not intended to replace human specialists. It should instead be viewed as a tool that can aid people in their work.

VI. GPT-4: Expectations and Reality

OpenAI has not made any public announcements concerning GPT-4, so little information is currently available. Still, GPT-3’s progress suggests that GPT-4 will have even more features and enhancements.

Hopes for GPT-4 include even more sophisticated natural language processing, better generalisation across tasks, and increased efficiency. It has also been speculated that GPT-4’s model size and computational requirements will increase. Some expect GPT-4 to generate writing and dialogue that is indistinguishable from that produced by a human.

It’s impossible to know what GPT-4 will be capable of before OpenAI formally announces it, so take these rumours with a grain of salt. Whether or not OpenAI is engaged in developing GPT-4 remains unknown.

It’s also important to remember that even if GPT-4 is released, it may not remain the “most advanced” AI for long, because AI is a rapidly developing field where new advances are constantly being made. Like GPT-3 before it, GPT-4 will have limitations and require human input, insight, and creativity to be fully utilised.

In conclusion, while the precise capabilities of GPT-4 remain unknown, it is reasonable to expect that they will exceed those of GPT-3. However, it is not easy to predict exactly what GPT-4 will be capable of, because AI is continually evolving and new advances are being made all the time.

Asking #chatGPT to give the size of #GPT4!

— CrazyTimes (@CrazyTi88792926) December 22, 2022

GPT-4 is a large language model developed by OpenAI that has 175 billion parameters. This is significantly larger than the number of parameters in previous versions of the GPT model, such as GPT-3, which also has 175 billion parameters. pic.twitter.com/PJyi7n7cVj

VII. Conclusion

In this post, we looked at the OpenAI-created GPT-3 model and its capabilities. We summarised the technology behind GPT-3, along with some examples of its strengths and weaknesses. We discussed where GPT-3 fits in the larger AI ecosystem and compared it to other AI models like Google Duplex.

In addition, we discussed how GPT-3 could affect different sectors of the economy and the jobs market. Finally, we discussed GPT-4, which remains a matter of speculation despite widespread anticipation that it will be far more sophisticated than GPT-3.

GPT-3 is a powerful AI model capable of many tasks. It still requires human involvement, knowledge, and creativity to be effective, so keep in mind that it is not a panacea for all work. Additionally, AI is a dynamic field in which improvements arrive frequently, so there may be a difference between what is hoped for and what GPT-3 and other models deliver.

FAQs

GPT-3 itself is not run locally: it is hosted by OpenAI and accessed through an API, so RAM figures such as 16GB or 32GB apply only to the machine running your own client software, not to the model.

GPT-3 is considered one of the most powerful AI models currently available. It has shown that it can understand and generate natural language at a high level.

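OpenAI has not released GPT-3’s training code. The company announced in 2020 that it was standardising on PyTorch, a deep learning framework used from Python, but the exact software stack behind GPT-3 has not been documented in detail.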

GPT-3 is one of the most advanced AI models regarding how well it understands natural language. But other AI models are very good at voice and image recognition.

The term “smartest AI” is subjective because it depends on the specific task or problem being addressed by the AI. GPT-3 is regarded as one of the most advanced AI models for processing natural language, but other AI models excel in other areas.

GPT-3 has been trained to work well with many kinds of text, including code. However, it is not intended to write code in the same manner as a human developer. It can produce code snippets, but they need to be reviewed by a person to ensure that they are correct and complete.

While GPT-3’s natural language generation capabilities are impressive, the tool is not meant to take the place of human programmers. It is helpful for things like code generation, but human review is still needed to ensure everything works as intended.
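Before a person reviews generated code, a simple automated check can at least catch snippets that are not valid Python. The sketch below uses the standard library’s `ast.parse` for that purpose. The two sample snippets are invented for illustration, and passing this check says nothing about whether the code is correct, so it supports human review rather than replacing it.

```python
import ast


def syntax_check(source):
    """Return (True, None) if source parses as Python, else (False, message)."""
    try:
        ast.parse(source)
    except SyntaxError as error:
        return False, f"line {error.lineno}: {error.msg}"
    return True, None


# Invented examples of what a model might return.
good_snippet = "def add(a, b):\n    return a + b\n"
bad_snippet = "def add(a, b)\n    return a + b\n"  # missing colon

for name, snippet in [("good", good_snippet), ("bad", bad_snippet)]:
    ok, message = syntax_check(snippet)
    print(name, "passes" if ok else f"fails ({message})")
```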
